Abstract

We introduce Wan-Animate, a unified framework for character animation and replacement. Given a character image and a reference video, Wan-Animate can animate the character by precisely replicating the expressions and movements of the character in the video to generate high-fidelity character videos. Alternatively, it can integrate the animated character into the reference video to replace the original character, replicating the scene's lighting and color tone to achieve seamless environmental integration. Wan-Animate is built upon the Wan model. To adapt it for character animation tasks, we employ a modified input paradigm to differentiate between reference conditions and regions for generation. This design unifies multiple tasks into a common symbolic representation. We use spatially-aligned skeleton signals to replicate body motion and implicit facial features extracted from source images to reenact expressions, enabling the generation of character videos with high controllability and expressiveness. Furthermore, to enhance environmental integration during character replacement, we develop an auxiliary Relighting LoRA. This module preserves the character's appearance consistency while applying the appropriate environmental lighting and color tone. Experimental results demonstrate that Wan-Animate achieves state-of-the-art performance. We are committed to open-sourcing the model weights and source code.

Method Overview

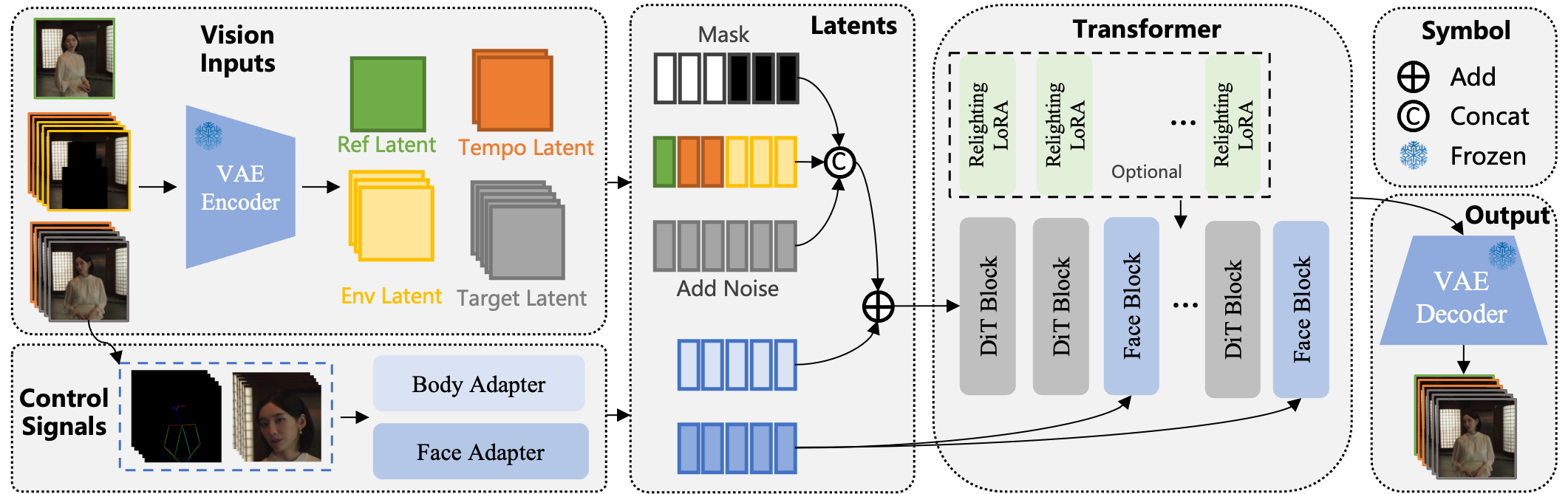

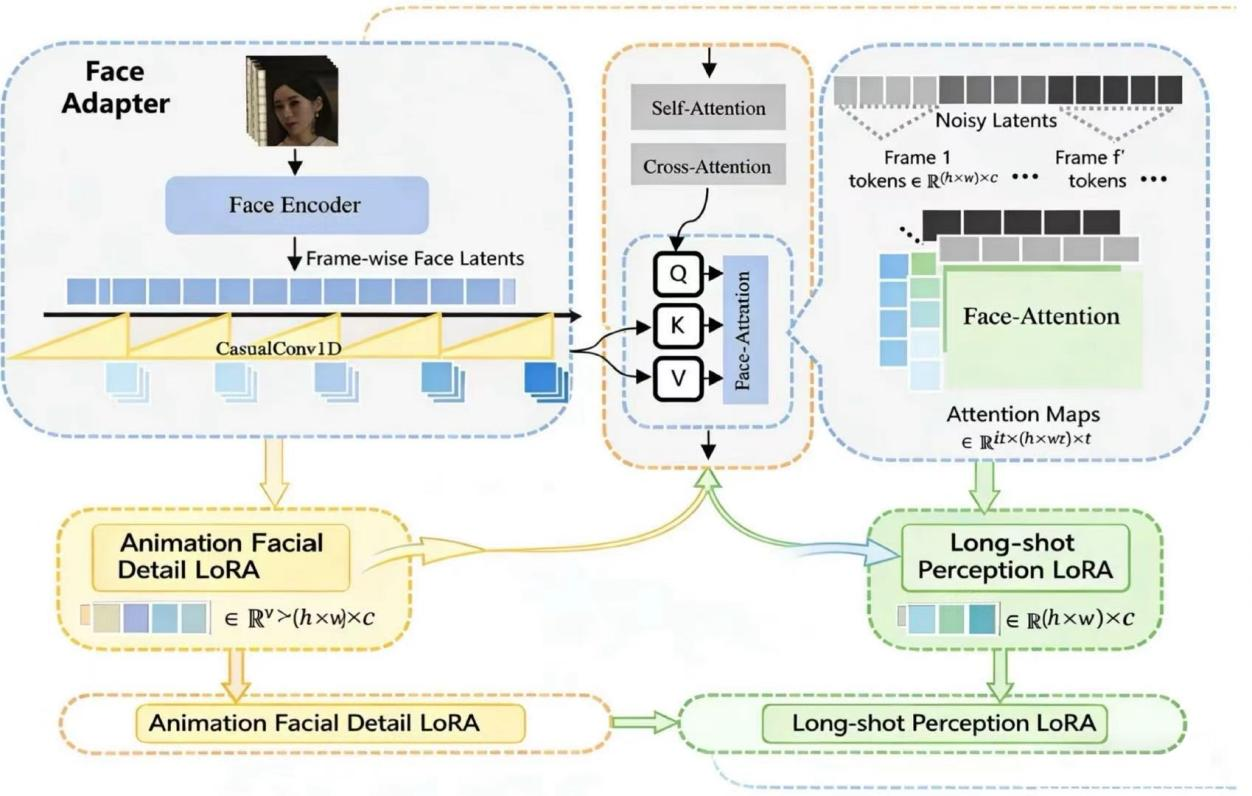

Overview of Double-Lora Digital Human. Overview of Wan-Animate, which is built upon Wan-I2V. We modify its input formulation to unify reference image input, temporal frame guidance, and environmental information (for dual-mode compatibility) under a common symbolic representation. For body motion control, we use skeleton signals that are merged via spatial alignment. For facial expression control, we leverage implicit features extracted from face images as the driving signal. Additionally, for character replacement, we train an auxiliary Relighting LoRA to enhance the character's integration with the new environment.
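To make the role of an auxiliary LoRA concrete, the following is a minimal sketch of the generic low-rank adaptation idea: a frozen base weight plus a scaled low-rank update that can be switched on only when needed. It is not the Relighting LoRA itself. The function names, the `enabled` flag (used here to stand in for the character-replacement mode), the toy shapes, and the values of `alpha` and the rank are all assumptions made for illustration.

```python
# Generic LoRA sketch in pure Python. Every name below is hypothetical and
# chosen for illustration; none comes from the Wan-Animate code base.

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def lora_forward(x, w, lora_a, lora_b, alpha, enabled=True):
    """Apply a frozen linear map plus an optional low-rank update.

    x:      (n, d_in) inputs
    w:      (d_in, d_out) frozen base weight
    lora_a: (d_in, r) down-projection
    lora_b: (r, d_out) up-projection (commonly zero at initialisation)
    alpha:  scaling hyperparameter; the update is scaled by alpha / r
    """
    base = matmul(x, w)
    if not enabled:
        # Adapter switched off: the base model's output is returned unchanged.
        return base
    rank = len(lora_b)
    update = matmul(matmul(x, lora_a), lora_b)
    factor = alpha / rank
    return [[b + factor * u for b, u in zip(base_row, update_row)]
            for base_row, update_row in zip(base, update)]


# Toy example: identity base weight and a rank-1 adapter.
x = [[1.0, 2.0]]
w = [[1.0, 0.0], [0.0, 1.0]]
lora_a = [[1.0], [1.0]]
lora_b = [[0.5, -0.5]]

# Assumed mapping: adapter off for animation, on for replacement.
animation_out = lora_forward(x, w, lora_a, lora_b, alpha=2.0, enabled=False)
replacement_out = lora_forward(x, w, lora_a, lora_b, alpha=2.0, enabled=True)
print(animation_out)    # [[1.0, 2.0]]
print(replacement_out)  # [[4.0, -1.0]]
```

Because the update is additive and low-rank, an adapter of this kind can be trained and stored separately from the base weights, which is one plausible reason to use it for a mode-specific adjustment such as relighting.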

Diversity-Styled Videos

Our model is trained on a cultural database and excels at generating culturally-styled videos.

Advantages

Our model detects human skeletons in the reference video and uses them to drive the character's body motion.

Our model can flexibly choose to use either an image or a video as the background.

Our model can output audio.

Comparison with SOTA Methods

Our method generates results with fine-grained motions, identity preservation, temporal consistency and high fidelity.

Pose Transfer

Portrait Animation

BibTeX

@article{luo2025dreamactor,
  title={Double-Lora Digital Human: Holistic, Expressive and Robust Human Image Animation with Hybrid Guidance},
  author={Luo, Yuxuan and Rong, Zhengkun and Wang, Lizhen and Zhang, Longhao and Hu, Tianshu and Zhu, Yongming},
  journal={arXiv preprint arXiv:2504.01724},
  year={2025}
}